Measuring without understanding “puts the cart before the horse”. If we measure something that is unclear or based on wrong assumptions, we are likely to end up with irrelevant data and/or misleading conclusions. It is crucial, therefore, that we understand before we decide what to measure or, indeed, whether to measure at all. This was the main message from Silva Ferretti, the freelance evaluation consultant who opened the third EvalForward Talks. She astutely pointed out that understanding first should be common practice in evaluation, but is not always the case.

The EvalForward Talks session underscored the need to reaffirm understanding as an evaluation priority and discussed why we are too often confronted by calls to measure first and foremost, the risks of overemphasizing the role of measurement and how to address these challenges in practice. Silva opened the discussion by sharing her rationale for and experience in addressing the excessive importance often attached to measurement.

Here are some of the highlights of the wealth of dialogue that ensued among participants.

The rationale behind understanding first

“Whatever the programme, whatever the outcome, immediately someone says: ‘Oh, we must make sure we measure it!’, almost like an obsessive measurement disorder,” Silva said.

We first need to capture and understand the dynamics at play, see what the changes look like and what is shaping them. Once this is clear, we can decide which aspects of these changes are worth measuring. If we start by measuring, the measure itself will be compromised by the many assumptions made. It seems such a simple and obvious concept, yet in practice, it is not systematically applied. As Silva explained, understanding is scary, because it means considering complexities. Nonetheless, is it not at the core of every evaluator’s work to understand connections, dynamics and influencing factors before deciding on the measures that are meaningful for that specific project or programme?

To illustrate the concept, Silva cited the obesity system map developed by the Foresight Programme of the UK Government Office for Science. The map shows the different variables underlying obesity, grouped into thematic clusters, as well as their positive and negative interlinkages. Strong comprehension led to the construction of an appropriate theory of change: understanding the dynamics we need to tackle in order to spur change, unpacking them and, eventually, measuring some aspects.

Silva also pointed to the evaluation of a resilience programme as another good example of why it is important to understand before we measure. To build an effective theory of change, it is important to be able to move from an initial framework of elements to factors of change (which lie behind every element of the framework), linkages (how they are connected), systems and some measures.

We are not discussing different methodological approaches here, in a binary mindset of quantitative versus qualitative evaluation. It is about making sure that all parties involved in an evaluation are aware of the relevant dimensions that need to be addressed and are clear about their meaning and underlying complexities. How to assess them is an entirely separate question.

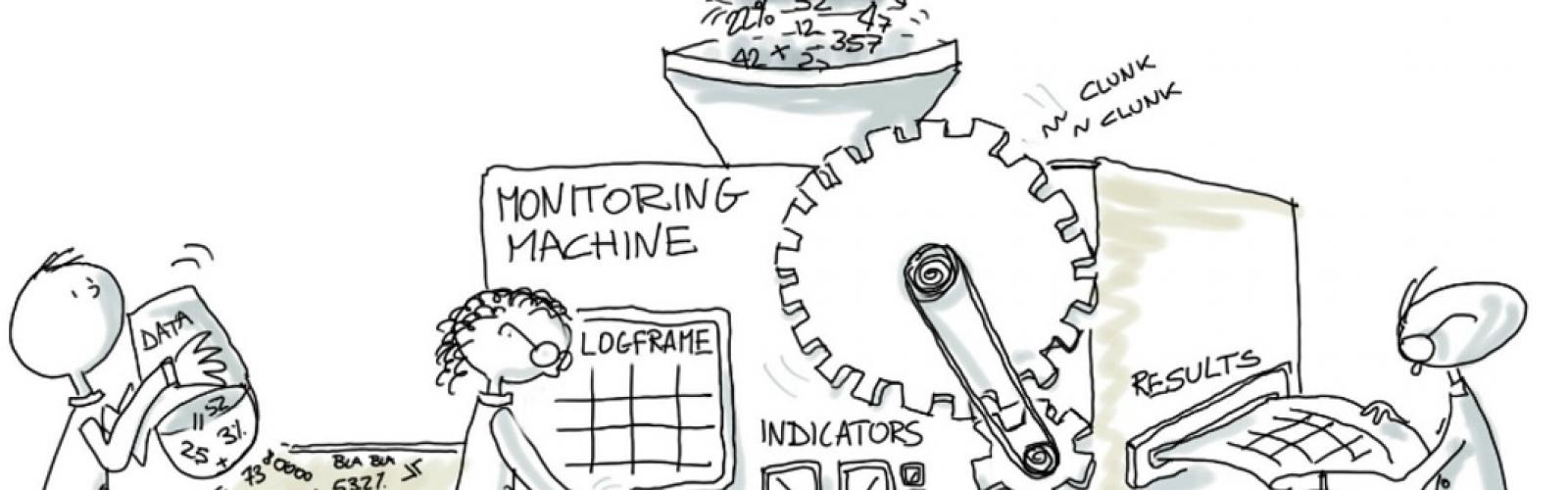

Link to Silva’s presentation here (and enjoy the lovely drawings).

Where are these calls for measurement coming from and how do we recognize them?

So why are the calls for measurement so strong and pervasive, and why is the process of measuring often accepted without question? Some of the dynamics and issues raised by participants are:

- The urgency of and focus on reporting times and deadlines often limit the potential for reflection and space for understanding.

- There is an idea that accountability and credibility are based on numerical data, hence the urge to go out and start collecting and producing data without carefully considering who is using it and how it is being used. “There is no sense to data produced for accountability,” said one participant.

- Project proposals, themselves, are sometimes based on a set of actions without any understanding of the underlying dynamics. What do we mean by obesity? Which dimensions do we need to capture so that policies can and will be developed based on the evidence produced?

- Measures and quantitative data are often seen as the only way to communicate results: why do we feel we have to provide numbers to be considered credible?

- Measurements and the process of measurement are accepted without question. Indicators may look positive when the reality is not, so deeper analysis may be needed.

- The common tendency to overemphasize measurement makes evaluations difficult to understand, and the resulting findings may simply be flawed or misleading.

- Some donors focus on measures and targets, but there are others that just give directions and leave it to implementers to decide how to progress. This is a much more open approach to learning and understanding.

Example: Measuring engagement with a local community by the number of participants in a specific workshop. We all know that this number does not say much. On the one hand, the participants may go home and enthusiastically share what they have learned with their neighbours and the rest of the community; on the other hand, they may simply attend to receive some kind of compensation, with no interest in learning or applying what they have heard. There are innumerable reasons why people might attend. So why are we still reporting such sterile numbers? What do we actually want to know about the community engagement?

Whose understanding matters?

Participants also noted a tendency to overlook the understanding of the people involved in a project. The design of a project should incorporate the understanding and perspectives of all participants and stakeholders, who can then share their understanding, be it through pictures, videos or other tools. Project staff also frequently struggle to get their voice and understanding heard.

What can we do about it?

Participants shared their experience:

- Using a mixed-method approach improves the chances of taking diverse structures and systems into consideration and getting to the root of the matter.

- Maps can be a useful way of identifying and understanding connections. They also help to communicate results with partners (use an iterative process: map, collect data, then map again and measure). Of course, understanding makes it easier to communicate and pass on the message: it allows us to be specific and get away from jargon and fancy vocabulary with no true meaning.

- Participatory approaches produce evidence and information on key dynamics that would not be available otherwise. They also foster collaborative understanding, enabling beneficiaries to understand and evaluators to facilitate this. We should encourage such approaches.

- We should change the mindsets of donors and other stakeholders by advocating more for an emphasis on understanding and the quality of results.

- We should use approaches such as outcome mapping or outcome harvesting to identify drivers of change, and build a complex system of connections so as to understand results.

- Evaluators should not shy away from reaffirming the need to understand, as this is core to evaluation.

- Remember the principles of utilization-focused evaluation developed by Patton,[1] whereby evaluations should be conducted in such a way as to enhance the use of their findings and the evidence produced.

- Even if the terms of reference seem inflexible, it is the evaluator’s job to clarify them. We may feel that donors want a classic approach, but they may be open to new perspectives if we present and argue them well.

“The cost of knowledge lost is what we need to start thinking of,” said one participant.

Click here for a board capturing the main challenges and solutions shared by participants.

This is not an exhaustive summary of the discussion and the many thoughts shared. Feel free to comment using the chat box below, or email info@evalforward.org.